The need for timely access to authoritative data for robust decision making has been one of the key lessons from the unprecedented upheavals wrought in Canterbury by the 2010-2011 earthquake series. In the pressure-cooker environment of post-disaster decision making, we truly reap the rewards of a fully implemented Spatial Data Infrastructure.

Arguably, quality metadata is as important as the data itself, if not more so. Metadata enables the user to establish the veracity of a dataset and assess its suitability for an intended use.

ISO metadata makes it possible to clearly indicate a dataset's custodian, steward, legal status, contact details, and spatial and temporal accuracy.

This is especially important when we publish our data to other agencies and the public realm using self-help facilities such as data.govt.nz.

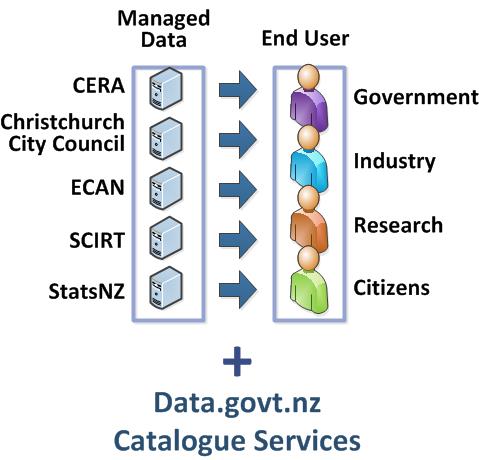

Caption: Organisations such as CERA, Christchurch City Council, ECAN, SCIRT, and StatsNZ manage data using best information practices. The data.govt.nz catalogue services make the data discoverable and consumable by end users such as government, industry, researchers, and citizens.

As users we should expect metadata to at least conform to ISO metadata standards. We should actively seek to discover and understand the dataset before we use that data for our own purposes.
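As a rough illustration of what "discovering and understanding" a dataset might look like in practice, the sketch below checks a metadata record for the items mentioned earlier before the data is used. The field names are hypothetical labels chosen for this example; they are not the element names defined by any ISO metadata standard, and a real check would validate against the published schema.

```python
# Hypothetical field names, loosely based on the items this article lists.
# They are NOT the element names used by any ISO metadata standard.
REQUIRED_FIELDS = (
    "custodian",
    "steward",
    "legal_status",
    "contact",
    "spatial_accuracy",
    "temporal_accuracy",
)


def missing_metadata(record: dict) -> list:
    """Return the required fields that are absent or empty in a metadata record."""
    return [
        field for field in REQUIRED_FIELDS
        if not str(record.get(field) or "").strip()
    ]


# Illustrative record only; the values are made up for the example.
example_record = {
    "custodian": "Example Agency",
    "steward": "Example GIS Team",
    "legal_status": "",
    "contact": "gis@example.org",
    "spatial_accuracy": "+/- 5 m",
}

print(missing_metadata(example_record))
```

A user could run a check like this as a first filter, then read the remaining fields to judge whether the dataset suits the intended purpose.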

As originators and publishers into the public realm, data managers need to ensure that metadata is part and parcel of the publication and maintenance of any dataset. A range of tools is available to support that process; indeed, all major GIS packages include a metadata editor as part of their standard toolset.

Metadata must be bundled with the dataset to ensure that the provenance is carried forward as data is used and reused, combined and extracted into other data products.
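One way to picture provenance being carried forward is a derived dataset that keeps a record of the metadata of every dataset it was built from. The sketch below is a minimal illustration under that assumption; the `Dataset` class, the `combine` function, and the agency names are all hypothetical and do not reflect any particular GIS tool or standard.

```python
from dataclasses import dataclass, field


@dataclass
class Dataset:
    """Hypothetical container that keeps rows and their metadata together."""
    name: str
    rows: list
    metadata: dict
    sources: list = field(default_factory=list)  # metadata of parent datasets


def combine(name: str, metadata: dict, *parents: Dataset) -> Dataset:
    """Merge parent rows and record each parent's metadata as provenance."""
    rows = [row for parent in parents for row in parent.rows]
    sources = []
    for parent in parents:
        # Keep the parent's own record plus anything it inherited.
        sources.append({"name": parent.name, **parent.metadata})
        sources.extend(parent.sources)
    return Dataset(name=name, rows=rows, metadata=metadata, sources=sources)


# Made-up datasets for illustration.
roads = Dataset("roads", [{"id": 1}], {"custodian": "Agency A"})
zones = Dataset("zones", [{"id": 2}], {"custodian": "Agency B"})
merged = combine("roads_and_zones", {"custodian": "Agency C"}, roads, zones)

print([source["name"] for source in merged.sources])
```

Because each derived dataset also inherits its parents' `sources`, the chain of custodians remains visible however many times the data is combined or extracted.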

Of course, this principle is not limited to geospatial data. However, geospatial data can often provide a means for an agency to move into the open and public data space without necessarily having to confront many of the issues touted as roadblocks, privacy among them. In this way the rich potential of our government data is unlocked for the benefit of all.