At the end of October, the Govt.nz team kicked off our latest pilot looking at NZ citizenship and how to get documents authenticated. We’re working with Service Delivery and Operations — part of our family here at the Department of Internal Affairs (DIA) — to redesign the content around user needs while shifting it from the DIA website on to www.govt.nz.

Understanding the issues

As always, we began by identifying the problems users have with these services, and the most common things people need from the website.

Looking at the numbers

To understand how the current website is performing, we looked at analytics for the last year and we found:

- which pages are the most visited on the website and which have almost no views

- common citizenship search terms through the Google Trends and AdWords keyword planner tools; then we checked to see what results they returned in Google

- that some of the terms like ‘citizenship ceremony’ and ‘citizenship application’ which people search for in Google don’t have corresponding pages currently on the DIA website; the answers people are looking for are actually on the DIA pages, but it’s not easy to find them.

Which link would you pick if you wanted to know how to apply?

The Authentications and Apostille section of the DIA website generates a high number of searches within the website. This shows that people aren’t finding what they want either on the page in front of them or by browsing the website.

Finding out what's already out there

After getting the popular search terms, we put ourselves in the users’ shoes and Googled all the terms to see what came up. This gave us a picture of what’s out there now: mostly DIA content, with a mix of embassies and immigration forums chipping in. We also audited the existing DIA site so we know exactly what’s there at the moment.

Online testing

We needed some feedback about current website performance so we commissioned some remote usability tests through User Testing. We asked the testers to complete basic tasks on the website and record their experience.

The limitations of this panel were that the people involved in testing were not actual customers and they were all fairly experienced with computers and the web. It was also difficult to test comprehension: sometimes users said they had finished the task but it was hard to know how much they’d understood. However, we found some clear issues across all the users.

People had trouble finding answers because they didn’t understand how the product names applied to their situation. We saw that ‘citizenship by grant’, which is a product name, was one of the main navigation options on the home page. But it meant nothing to users so they didn’t click on it. This is backed up by analytics — most people aren’t searching ‘citizenship by grant’ so it confuses them to see it on the navigation.

We also saw some very frustrated users trying to enter travel dates into the citizenship eligibility calculator. On average it took 7 minutes to check eligibility using the calculator. We heard comments like “this website is horrible!”.

The test measured:

- the time it took to complete each task

- whether users thought they were successful

- how difficult they found it, and

- users’ ratings for how easy or difficult the website was to use overall.

We’ll do this again when we’ve finished in order to measure the difference we’ve made.

Remote testing was an effective, fast way of getting feedback, but we’ll make sure we supplement it with feedback from real customers.

Contact centre

Talking to customer support staff is always great because they can tell us what problems people are having and which problems affect the most people. We had a workshop with the citizenship contact centre staff and found out that people are mostly calling to:

- find out if they’re eligible

- make appointments for interviews

- check on their application

- find out processing timeframes, and

- see when their ceremony will be.

The contact centre also get lots of questions about passport applications, as well as visas and residency statuses — most of which they can’t actually help with.

This once again shows us that if we only looked at things through an organisational lens, we wouldn’t see our users’ complete journeys.

How we’re working

The start of the pilot coincided with the launch of Government Information Services’ new way of doing things as part of DIA’s Trusted Government Information Portfolio. Our way of working for this pilot is a test run for how the portfolio delivery teams will function.

While we’ve run our Discovery Phases in agile sprints before, we’ve always had a bit of a weak spot transitioning to delivery mode. To help with this, we used a couple of techniques to understand what we needed to do, which gave us a backlog for the project.

A team with everyone on it

Previously, the development team and the content team have been separate, and lining up the dependencies between them has been difficult. Now, we have a pilot team with people representing content, development, testing and design and we use the Scrum framework to work together.

We’re not sure yet how this will work for content production, but we have retrospectives every two weeks to reflect on how we’re working so that we can adapt if we need to.

We also have our subject matter experts from the Citizenship and Authentications teams sitting with us as part of the team. This is great because:

- we have constant questions for them, and

- reviewing content is often given to people on top of their regular work, which slows down delivery; having them on the team avoids that.

The team at work.

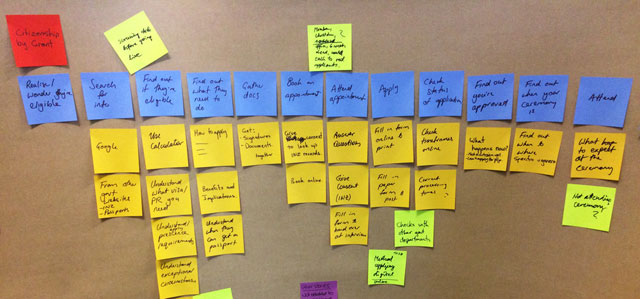

User journeys

To help us get the perspective of users, we mapped their journeys through the citizenship and authentications processes. This made us think about all the work users are doing throughout the process, as well as when they do things online.

It also made us think about the motivations for using these different products: Who needs an Apostille for their birth certificate? Who’s registering for citizenship by descent and when?

Mapping user journeys helps us fit products into goals like ‘settle permanently overseas’ or ‘get a first passport for my baby so we can visit family for Christmas’.

Story mapping

Once we’d written the user journeys, we created our backlog using a process called story mapping. We looked at each step in the user journeys to see what pieces of work we could do to support it and these became our user stories.

For example, in the user journey to prove documents are legitimate:

- Find out what I need to do

  - As a person wanting a document authenticated, I want to know how to apply so that I know what I need to do.

  - As a person wanting a document authenticated, I want to know which documents I need notarised so that I can get copies of them.

  - As a person wanting a document authenticated, I want to know where I can get documents notarised so that I know where to go.
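
For readers who think in data structures, the example above can be sketched roughly as follows. This is only an illustration of the idea of a journey step that owns a set of user stories, which are then flattened into a backlog. The names `UserStory` and `JourneyStep` are made up for this sketch; they are not part of any tool we used, and our actual story map lives on a wall.

```python
from dataclasses import dataclass, field


@dataclass
class UserStory:
    """Hypothetical container for one 'As ..., I want ... so that ...' story."""
    persona: str   # who the story is for
    want: str      # what they want to do or know
    benefit: str   # why it matters to them

    def sentence(self) -> str:
        # Render the story in the usual user-story sentence form.
        return f"As {self.persona}, I want {self.want} so that {self.benefit}."


@dataclass
class JourneyStep:
    """Hypothetical container for one step in a user journey."""
    name: str
    stories: list[UserStory] = field(default_factory=list)


persona = "a person wanting a document authenticated"
step = JourneyStep(
    name="Find out what I need to do",
    stories=[
        UserStory(persona, "to know how to apply", "I know what I need to do"),
        UserStory(persona, "to know which documents I need notarised",
                  "I can get copies of them"),
        UserStory(persona, "to know where I can get documents notarised",
                  "I know where to go"),
    ],
)

# A story map is a list of journey steps; the backlog is every story
# from every step, flattened into one list.
story_map = [step]
backlog = [story.sentence() for s in story_map for story in s.stories]

for item in backlog:
    print(item)
```

Keeping the journey step attached to each story is what makes it easy to see which part of the user's journey a piece of work supports.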

Our story map for citizenship by grant.

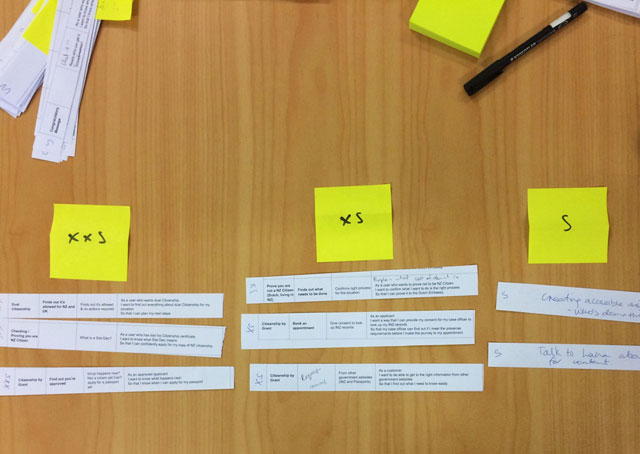

Sizing

To make decisions about what to do first, we needed to gauge the size of different bits of work.

Our initial sizing experience consisted of us getting in a room and laying out the user stories on a spectrum from very big to very small. The chosen size was based on the complexity, number of people involved and how much we already knew about it, rather than how long it might take.

As we deliver these stories, we’ll have more and more information about how long things take. This means we’ll be continually adjusting and re-prioritising instead of trying to get it precise the first time.

Stories ranging from extra extra small to small.

What’s next

Now that we have our backlog, we’ll prioritise it in order of importance and group it into logical chunks to work on. Because we spent less time doing discovery work upfront than we usually would, we’ll be doing lots more prototyping and research throughout delivery. We’ll also be talking to people going through the citizenship process to make sure that we understand and meet their needs.